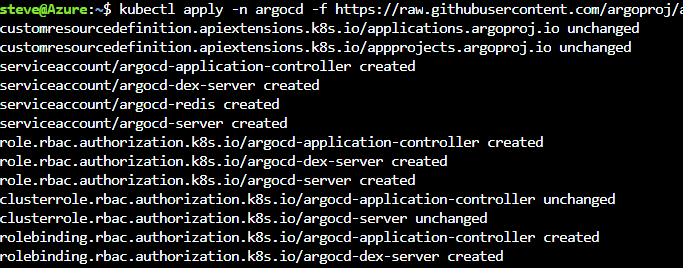

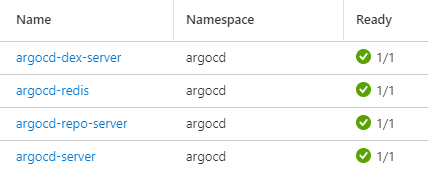

In my last post on Argo CD with AKS, I mentioned that the next post would explore deploying an app via Argo CD. In this post we are going to do just that: I will walk through deploying an app from Argo CD to AKS. Note that the same process works for any Kubernetes cluster. This is not going to be a long post, as the process is straightforward.

First of all, you can deploy an app from either the Argo CD web UI or the CLI. Before you start, have your application ready in a Git repository. It does not matter which hosting service you use as long as it is Git-based: Azure DevOps, GitLab, Bitbucket, and so on all work. In my case I use GitHub. To deploy an app, you point Argo CD at a Git repository that contains Kubernetes manifests, a Helm chart, or a Kustomize configuration. In this blog post I am going to keep it simple and use the Hello Kubernetes app from Paul Bouwer. OK, now let's jump in.

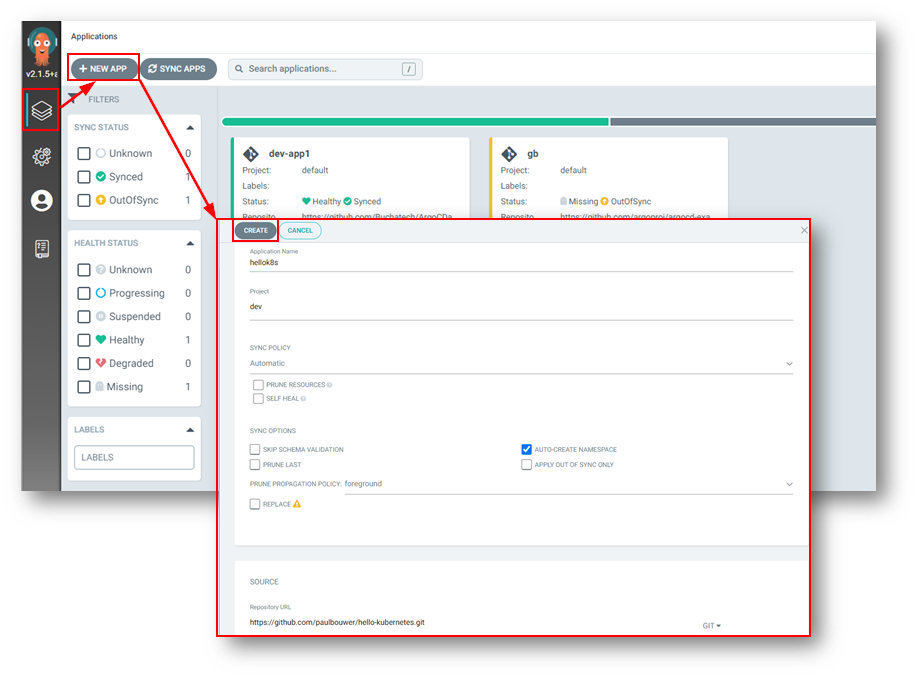

Here are the steps for deploying an app to Argo CD from the web UI:

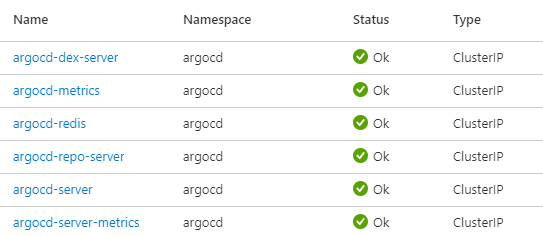

- In the Argo CD web UI ensure you are on the Applications page

- Click the + NEW APP button

- Give the app the name hellok8s, use the project default (I used a dev project in my example), select Automatic for the sync policy, and check AUTO-CREATE NAMESPACE

- Under SOURCE, use https://github.com/paulbouwer/hello-kubernetes.git for the Repo URL and select deploy/helm/hello-kubernetes for the path

- For the DESTINATION select https://kubernetes.default.svc for the Cluster URL and use hellok8s for the namespace

- Leave all the defaults under HELM

- Click the CREATE button at the top of the UI
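The same settings can also be written down declaratively as an Argo CD Application manifest, which is handy if you want the app definition itself kept in Git. This is a minimal sketch rather than the method used in this post. It assumes Argo CD is installed in the `argocd` namespace and that the app should track `HEAD` of the repository; the file name `hellok8s-app.yaml` is arbitrary. None of those three details come from the steps above.

```shell
# Write an Application manifest that mirrors the web UI settings above.
# Assumptions (not from the post): Argo CD runs in the "argocd" namespace,
# the app tracks HEAD, and the output file name is arbitrary.
cat > hellok8s-app.yaml <<'EOF'
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: hellok8s
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/paulbouwer/hello-kubernetes.git
    path: deploy/helm/hello-kubernetes
    targetRevision: HEAD
  destination:
    server: https://kubernetes.default.svc
    namespace: hellok8s
  syncPolicy:
    automated: {}            # same as selecting Automatic in the UI
    syncOptions:
      - CreateNamespace=true # same as checking AUTO-CREATE NAMESPACE
EOF

# Apply it once kubectl is pointed at the cluster:
#   kubectl apply -f hellok8s-app.yaml
echo "Wrote hellok8s-app.yaml"
```

Once applied, the app should show up on the Applications page just as if it had been created through the form.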

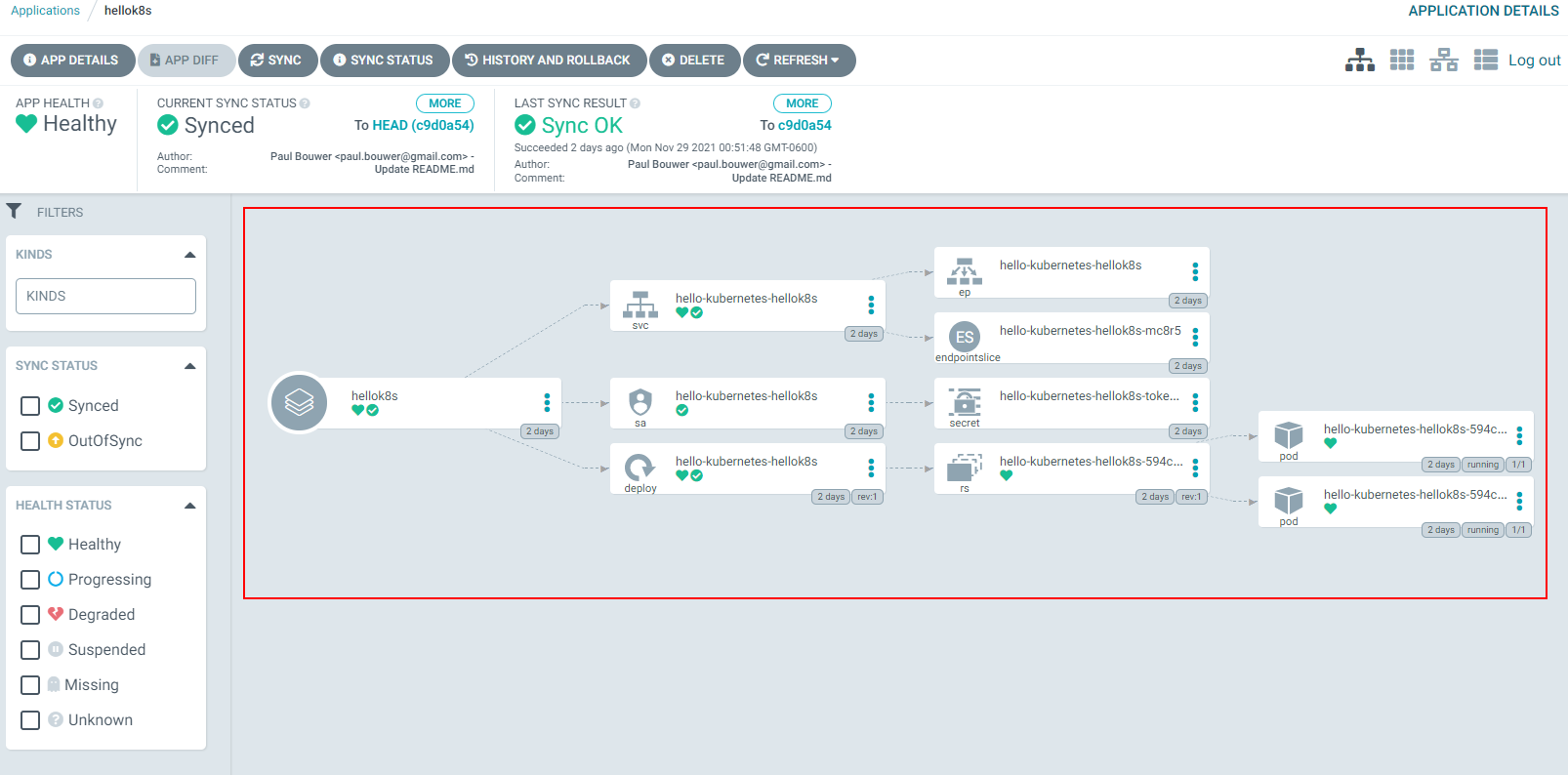

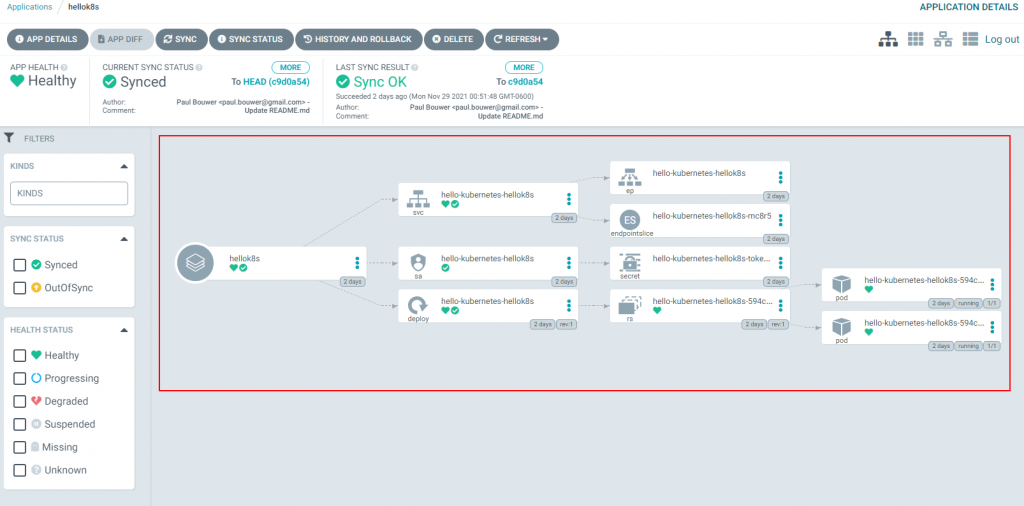

Once the app is deployed it will look like this:

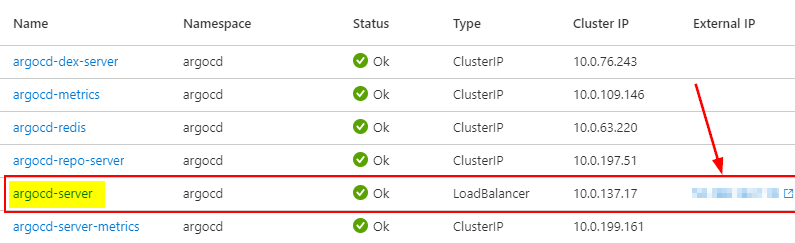

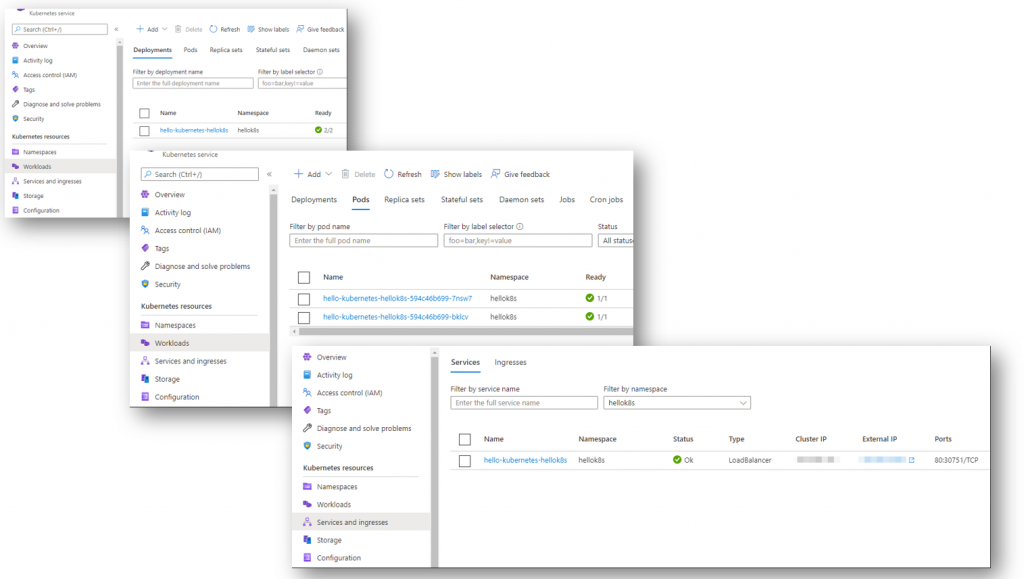

You can now view the resources in AKS. In the following screenshot you can see the deployment, the pods, and a service of type LoadBalancer.

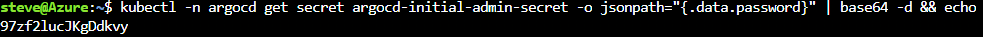
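If you would rather check from a terminal than from a screenshot, the same resources can be listed with kubectl. This is a small sketch, assuming your kubeconfig already points at the AKS cluster and the app was deployed to the hellok8s namespace from the web UI steps. The function name is hypothetical and only there for illustration.

```shell
# List the deployment, pods, and service that Argo CD created.
# Assumes kubectl is already authenticated against the cluster.
show_hellok8s_resources() {
  # The namespace defaults to hellok8s (the one set in the web UI steps);
  # pass a different namespace as the first argument to override it.
  kubectl get deployments,pods,services --namespace "${1:-hellok8s}"
}
```

Run `show_hellok8s_resources` to see everything in hellok8s, or `show_hellok8s_resources default` to look in another namespace. The LoadBalancer service should show an external IP once Azure has finished provisioning it.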

You can also speed things up by deploying your app via the Argo CD CLI. This accomplishes the same goal as deploying the app via the Argo CD web UI.

Deploying an app to Argo CD from the Argo CD CLI:

`argocd app create hellok8s --repo https://github.com/paulbouwer/hello-kubernetes.git --path deploy/helm/hello-kubernetes --dest-server https://kubernetes.default.svc --dest-namespace default`
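Note that the command above targets the default namespace and sets no sync policy, so it does not match the web UI walkthrough exactly. Here is a sketch of a closer equivalent, assuming you are already logged in with `argocd login`. The two function names are hypothetical wrappers for illustration, and the 300-second timeout is an arbitrary choice.

```shell
# Create the app with the same settings as the web UI steps:
# namespace hellok8s, automatic sync, and an auto-created namespace.
create_hellok8s_app() {
  argocd app create hellok8s \
    --project default \
    --repo https://github.com/paulbouwer/hello-kubernetes.git \
    --path deploy/helm/hello-kubernetes \
    --dest-server https://kubernetes.default.svc \
    --dest-namespace hellok8s \
    --sync-policy automated \
    --sync-option CreateNamespace=true
}

# Block until the app reports Synced and Healthy, or the timeout expires.
wait_for_hellok8s_app() {
  argocd app wait hellok8s --sync --health --timeout 300
}
```

Calling `create_hellok8s_app` followed by `wait_for_hellok8s_app` gives you a single scriptable step that only returns once the app is up.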

That wraps things up for this post. Check back soon for more posts on Argo CD, GitOps, Kubernetes, and Azure topics.