The energy around Microsoft Build is always unmatched, but this year’s event holds a special place for me. I am excited to share that, for the first time, I will be attending Microsoft Build 2026 not just as an attendee but as one of the Microsoft Experts in the Expert Meetup!

If you are heading to San Francisco, you can find me and a fantastic group of Microsoft Full-Time Employees (FTEs) and fellow Microsoft MVPs over in the Festival Pavilion. This dedicated area is designed for deep dives, unfiltered technical discussions, and collaborative problem-solving.

What is the Expert Meetup?

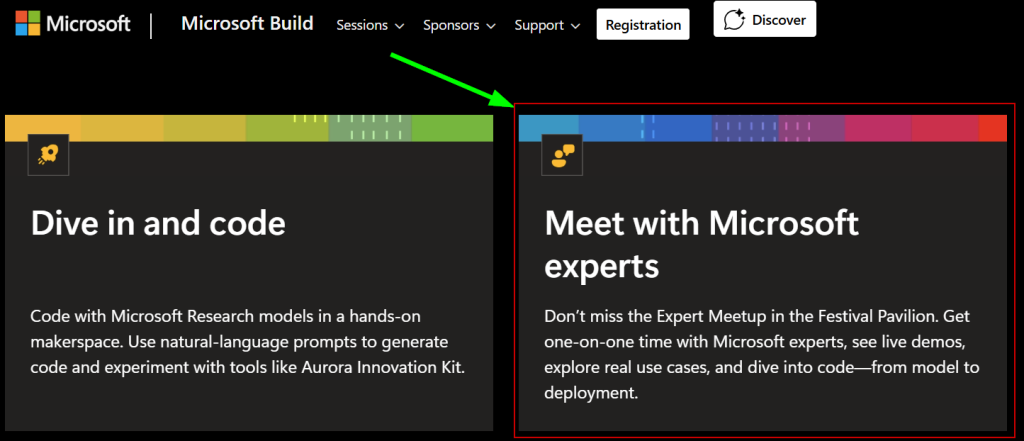

The Expert Meetup is all about direct, one-on-one connection. It’s a space where you can get dedicated time with folks who live and breathe this technology every day. Whether you want to see live demos, explore highly specific real-world use cases, or dive into code, from foundational models all the way to production deployment, this is where it happens.

My Focus Areas: Cloud Native, Open Source, and Beyond

The expert area spans an incredible lineup of modern technology domains, including Azure Application Services, AI-Ready Infrastructure, Governance & Compliance, and Agentic Modernization, but my primary focus will be on Cloud Native architectures.

I’ll be on hand to chat about everything from Kubernetes, Azure Kubernetes Service, and container strategies to microservices scaling and the modern developer experience. We can also talk about the following technical areas:

- Cloud Native & Open Source: Integrating OSS tooling seamlessly into your enterprise ecosystem.

- Artificial Intelligence: Bridging the gap between cloud-native infrastructure and AI-ready workloads.

- General Azure Architecture: Best practices, optimization strategies, and landing zone foundations.

Let’s Connect

Events like Build are fundamentally about the community. If you are a former Microsoft colleague, a fellow Microsoft MVP, a GitHub Star, an enterprise developer, or a cloud or cloud native enthusiast, let’s connect! Stop by the Festival Pavilion, grab me for a coffee, or ping me ahead of time so we can sync up.

Let’s talk code, AI, and Cloud Native, share what we are building, and figure out how to solve your toughest engineering challenges together. See you in the Festival Pavilion!