Many organizations have embraced DevOps and adopted technologies like Kubernetes, cloud computing, and Infrastructure as Code (IaC) tools like Terraform or Pulumi. Despite these efforts, they often face challenges in delivering on the promises of DevOps and cloud-native. Platform engineering has emerged as the next step in the evolution, breaking down barriers and empowering developers to bring software to the market faster and more efficiently.

Recently I have been working on content to educate and share my knowledge in this space. I am happy to announce two new pieces of content on Platform Engineering: a new course and a new blog post.

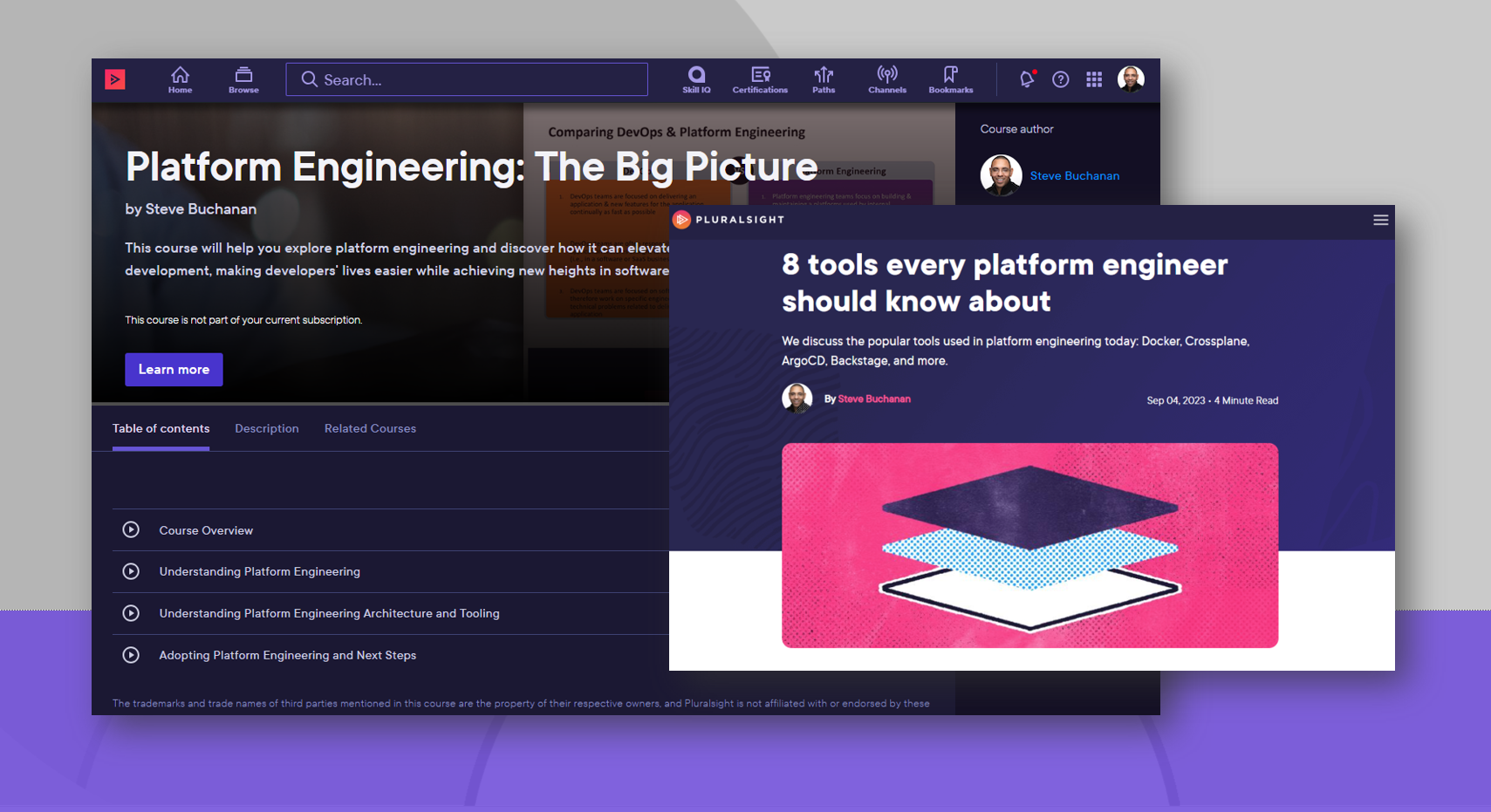

Course: Platform Engineering: The Big Picture

Last week my 22nd course was published on Pluralsight! I am really excited about this one because it covers Platform Engineering, a topic that has been getting a lot of attention in tech lately. Since I work directly with Kubernetes and cloud native, this course was right up my alley.

The course is titled “Platform Engineering: The Big Picture”. It will help you explore platform engineering and discover how it can elevate cloud-native development. Platform Engineering unifies and centralizes toolchains and workflows for self-service, making developers’ lives easier while achieving new heights in software delivery.

In this course, you will gain an understanding of Platform Engineering: its benefits, architecture, tooling, and workflow, and how to adopt it.

Some of the major topics covered in the course include:

- An overview of Platform Engineering: why it’s needed, and how platforms enhance DevOps and streamline cloud native.

- A comparison of DevOps, SRE, and Platform Engineering.

- Platform Engineering architecture, its tooling landscape, and Internal Developer Platforms.

Check out the “Platform Engineering: The Big Picture” course here:

https://www.pluralsight.com/courses/platform-engineering-big-picture

I hope you find value in this new Platform Engineering course. Be sure to follow my profile on Pluralsight so you will be notified as I release new courses!

Here is the link to my Pluralsight profile:

https://www.pluralsight.com/authors/steve-buchanan

Blog: 8 tools every platform engineer should know about

I am also excited to announce my second Platform Engineering-related blog post on Pluralsight, titled “8 tools every platform engineer should know about”. Many tools can make up a platform, and it can be hard to know which ones to focus on. In this post, I list 8 tools that are must-knows for anyone working in the Platform Engineering space.

👉 Read the blog post here:

https://www.pluralsight.com/resources/blog/it-ops/top-platform-engineering-tools