Hey everyone, today I’m super excited to tell you about a recent episode of Azure Friday that I was lucky enough to be a guest on.

Azure Friday is a weekly video series hosted by the legendary Scott Hanselman, where he interviews experts and developers on various Azure-related topics. In this episode, we talked about Automated Deployments for Azure Kubernetes Service (AKS), a new feature that makes it super easy to deploy your apps to AKS.

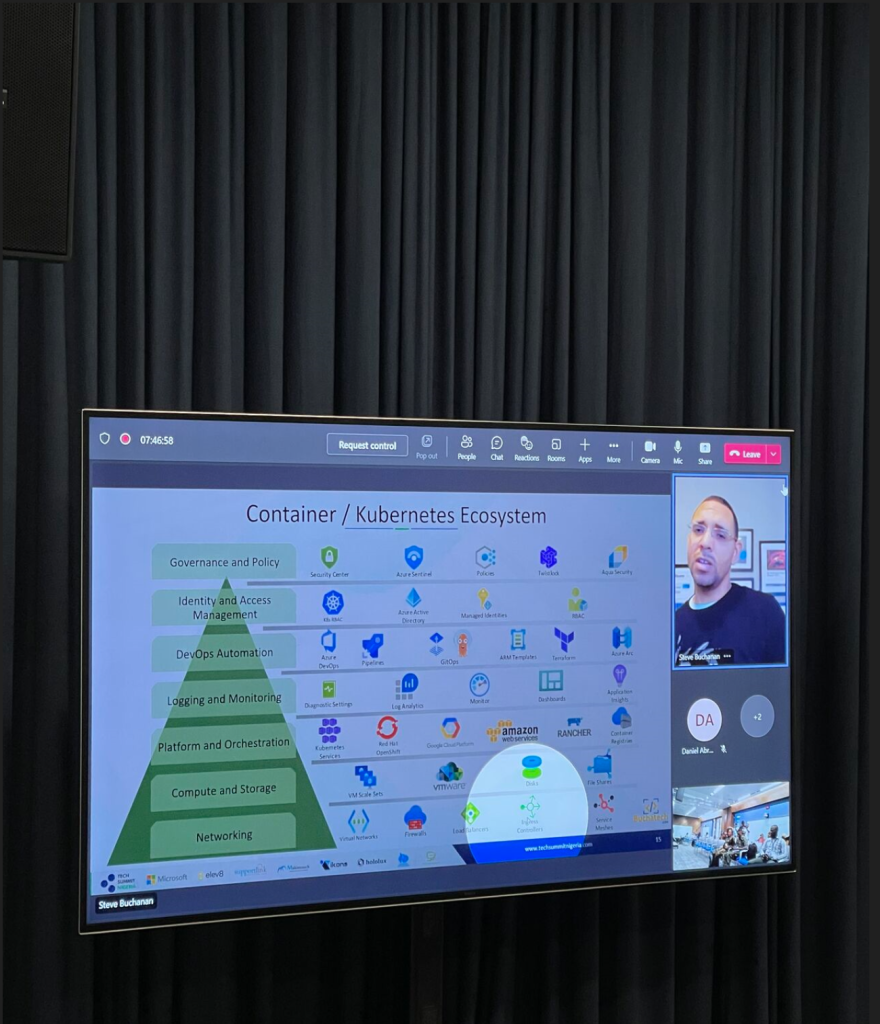

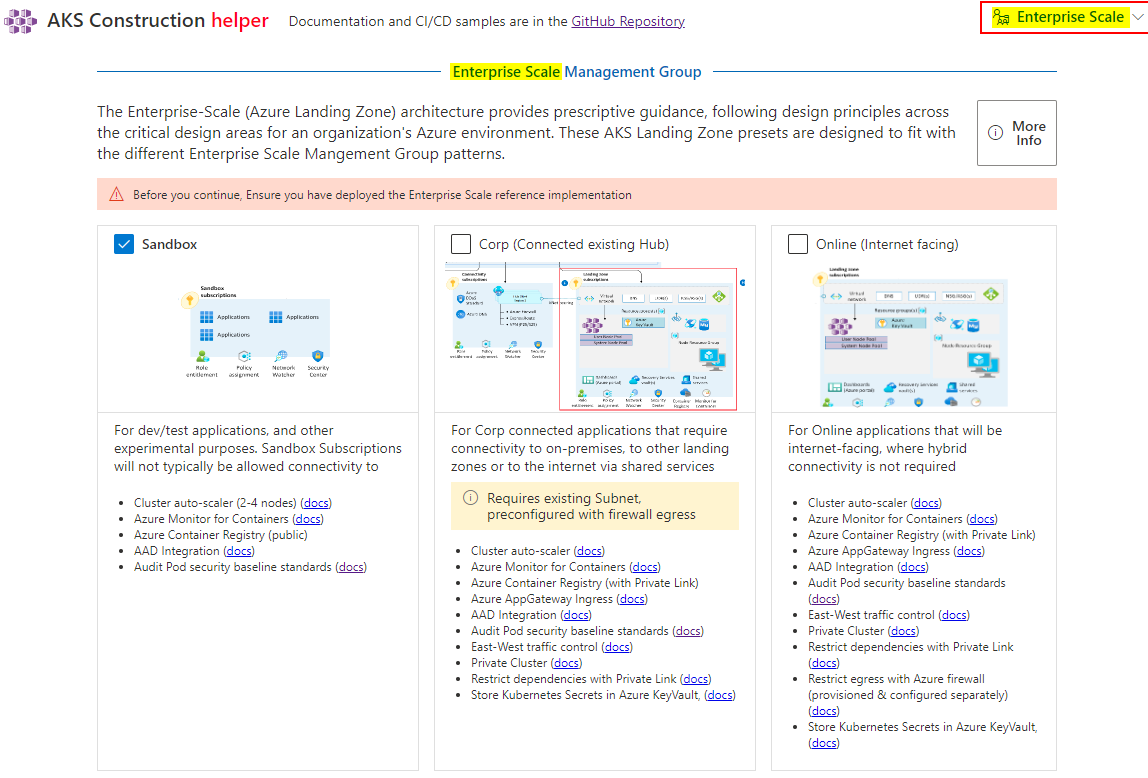

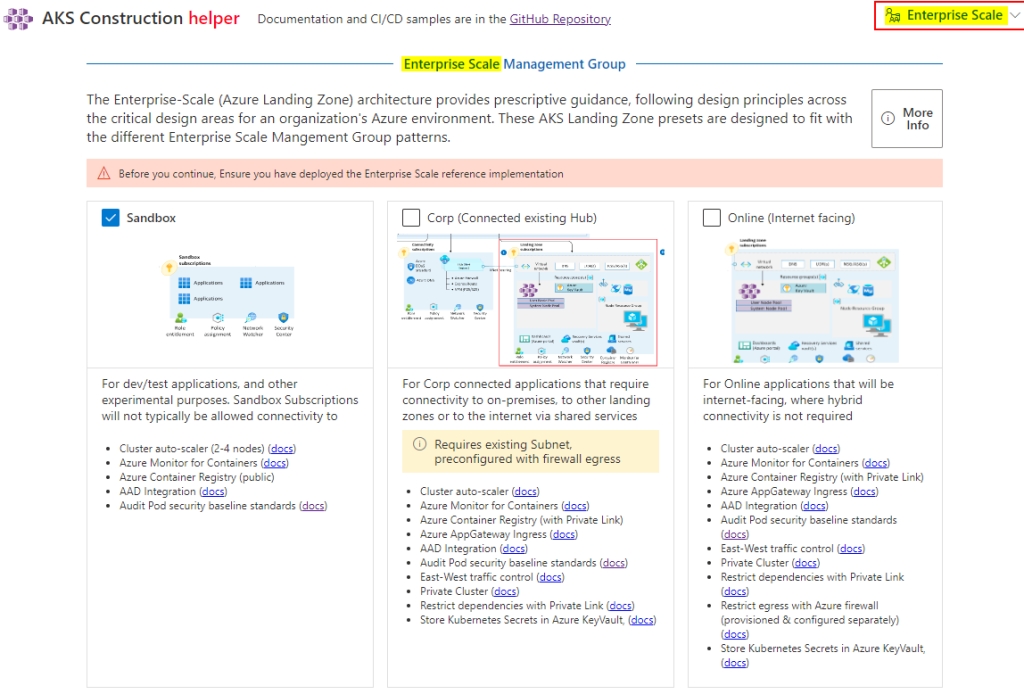

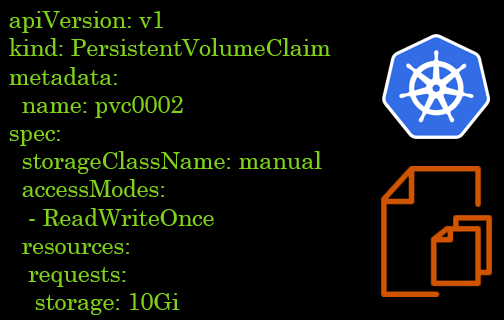

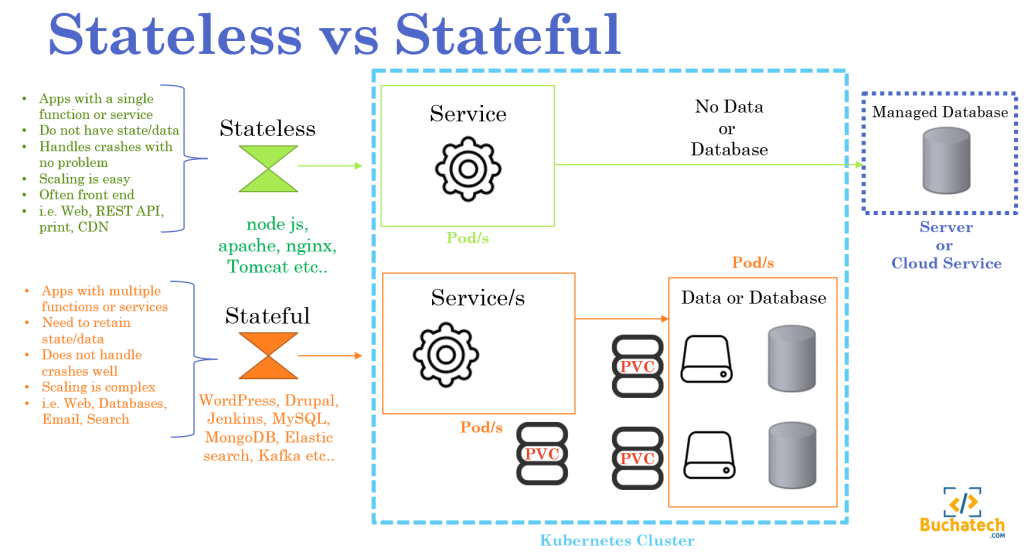

If you’re not familiar with AKS, it’s a managed Kubernetes service that lets you run containerized applications on Azure while Azure handles much of the complexity of operating the cluster, such as managing the Kubernetes control plane. It’s a great way to scale your apps and take advantage of Kubernetes features such as high availability, load balancing, and service discovery.

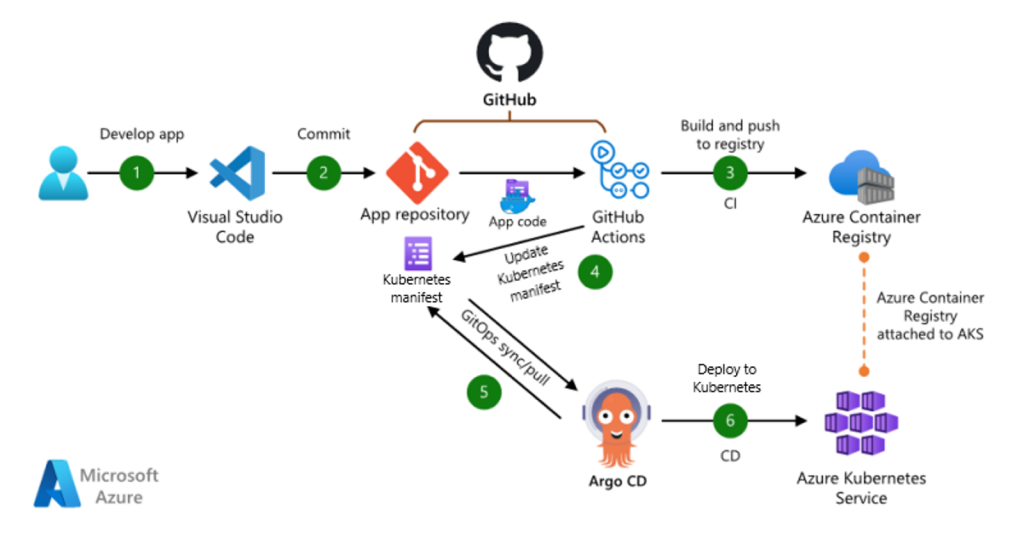

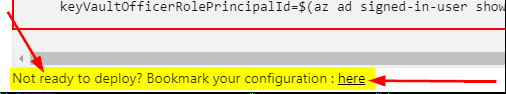

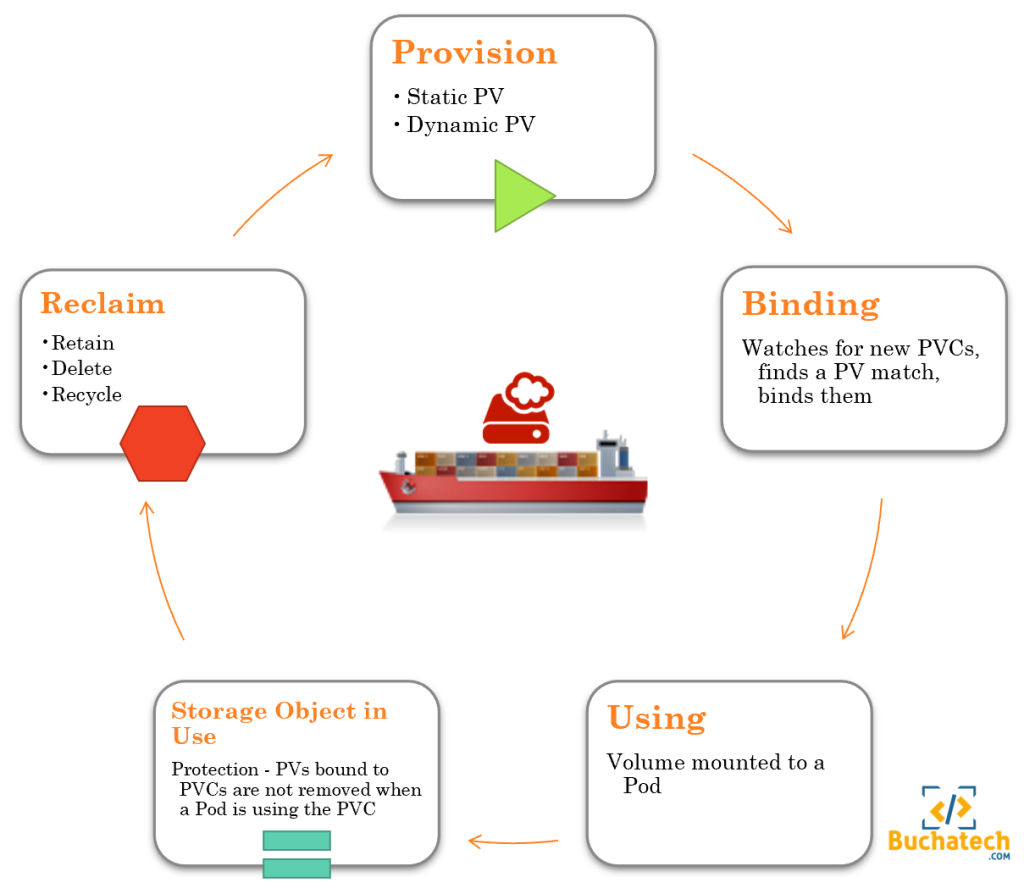

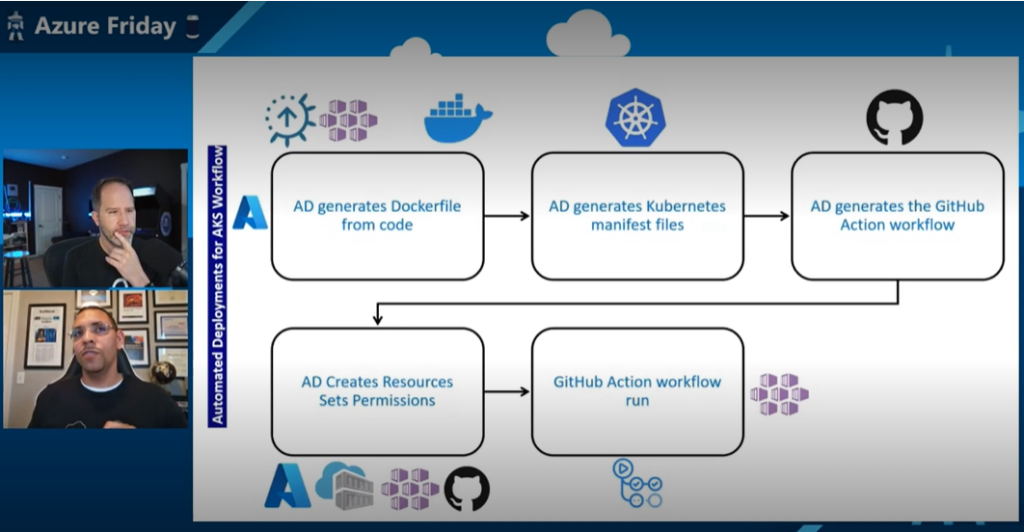

But what if you’re not familiar with containers or Kubernetes? What if you just have some code in a GitHub repo and you want to run it on AKS? That’s where Automated Deployments for AKS comes in. It’s a feature that simplifies the Kubernetes development process by taking care of the tedious work of containerization for you. It uses a tool called Draft, which automatically detects the language and framework of your app and creates a Dockerfile and a Helm chart for you. It also sets up a GitHub Actions workflow that builds and pushes the image to Azure Container Registry and deploys the app to AKS. All with just a few clicks in the Azure Portal.
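To make the "automatically detects the language" step a little more concrete, here is a minimal Python sketch of one simple way such detection could work: look for a well-known marker file in the repo root. The `MARKERS` table and the `detect_language` function are hypothetical illustrations, not Draft's actual implementation, which may use a different and more thorough approach.

```python
from typing import Iterable, Optional

# Hypothetical mapping from marker files to languages.
# Illustration only; Draft's real detection logic may differ.
MARKERS = {
    "package.json": "javascript",
    "requirements.txt": "python",
    "pom.xml": "java",
    "go.mod": "go",
    "Cargo.toml": "rust",
}


def detect_language(filenames: Iterable[str]) -> Optional[str]:
    """Return the language for the first known marker file in the repo root.

    Markers are checked in MARKERS order, so if a repo contains several
    marker files, the earlier entry wins. Returns None if nothing matches.
    """
    present = set(filenames)
    for marker, language in MARKERS.items():
        if marker in present:
            return language
    return None


if __name__ == "__main__":
    # Example: a repo root containing a Go module.
    print(detect_language(["README.md", "go.mod", "main.go"]))
```

In a tool like this, the detected language would then decide which Dockerfile and chart templates get generated for the app.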

Sounds amazing, right? Well, that’s what I wanted to show Scott in this episode. I had an app hosted in a GitHub repo that I wanted to run on AKS. The app was a simple web app that displayed some data from a database. I had already created a few resources in Azure, such as a resource group, an Azure Container Registry, and an AKS cluster. All I needed to do was use Automated Deployments for AKS to get this app from code to running on a cluster.

So how did it go? Well, you’ll have to watch the episode to find out. But spoiler alert: it was super easy and fast. In just a few steps, I went from code to an app running on AKS. Scott was impressed, and so was I. We had a great time chatting about how Automated Deployments for AKS works under the hood, some of its benefits and limitations, and how it can help developers get started with containers and Kubernetes.

Check out the episode here:

With Automated Deployments, Microsoft is making it easier for developers to adopt containers and AKS without first having to become Kubernetes experts, so they can focus on building scalable and robust applications.

If you’re interested in learning more about Automated Deployments for AKS, you can check out the documentation here: https://learn.microsoft.com/en-us/azure/aks/automated-deployments. It’s available today in public preview, so you can try it out for yourself and see how easy it is to run your apps on AKS.

That’s all for today. I hope you enjoy this episode of Azure Friday as much as I did. It was an honor and a pleasure to be a guest on Scott’s show and talk about one of my favorite topics: Azure Kubernetes Service. If you have any questions or feedback, feel free to leave a comment or reach out to me on Twitter at @Buchatech. Thanks for reading and happy coding!